No more Wine - NetworkMiner in Linux with Mono

UPDATE

See our blog post How To install NetworkMiner in Linux for a more up-to-date installation guide.

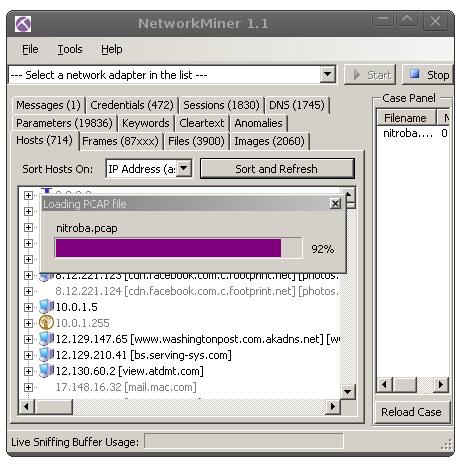

NetworkMiner is a network forensics tool that is primarily designed to run under Windows. As of version 1.2, however, it is also possible to run NetworkMiner on non-Windows operating systems such as Linux, Mac and FreeBSD with the help of Mono. This means that there is no longer any need to run NetworkMiner under Wine, since Mono is a much better alternative.

So what is Mono? Here is a description from the mono-project's website:

“Mono is a software platform designed to allow developers to easily create cross platform applications. Sponsored by Xamarin, Mono is an open source implementation of Microsoft's .NET Framework based on the ECMA standards for C# and the Common Language Runtime. A growing family of solutions and an active and enthusiastic contributing community is helping position Mono to become the leading choice for development of Linux applications.” (emphasis added)

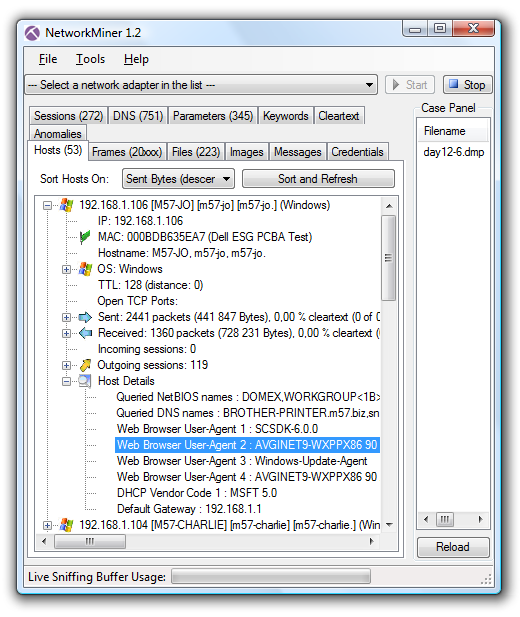

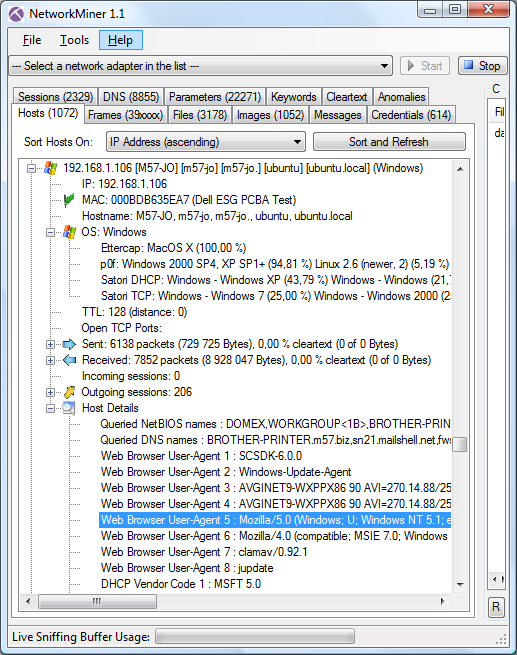

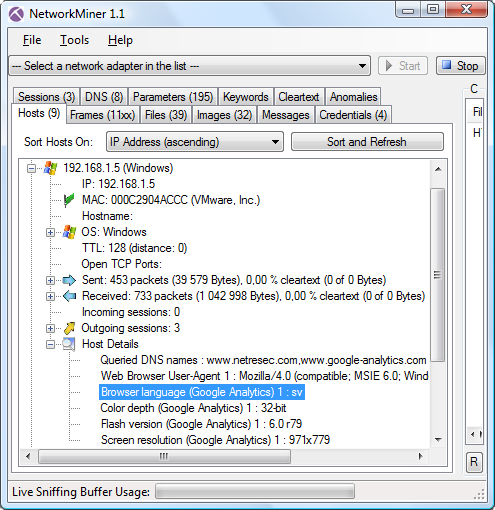

Here are some of the features in NetworkMiner that work better under Mono compared to Wine:

- Drag-and-drop pcap files onto NetworkMiner works under Mono

- Extracted/reassembled files are put in OS-native folders (under the NetworkMiner/AssembledFiles folder)

- Right-clicking an extracted file or image and selecting “Open file” or “Open containing folder” works under Mono

- No big Wine install is required; the Mono framework only requires 32 MB to install

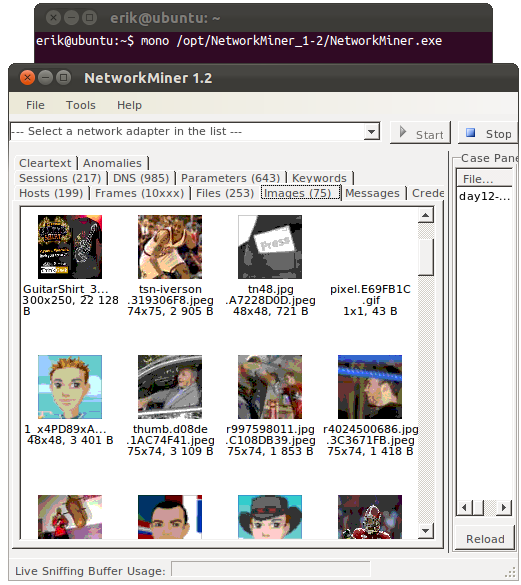

Here are the commands required to install Mono and NetworkMiner on Ubuntu Linux:

sudo apt-get install libmono-winforms2.0-cil

wget www.netresec.com/?download=NetworkMiner -O /tmp/networkminer.zip

sudo unzip /tmp/networkminer.zip -d /opt/

cd /opt/NetworkMiner_*

sudo chmod +x NetworkMiner.exe

sudo chmod -R go+w AssembledFiles/

sudo chmod -R go+w Captures/

mono NetworkMiner.exe

Write permission is set on the AssembledFiles folder because this is the directory where extracted files are written. If you would rather have the files extracted to /tmp or the user's home directory, simply move the AssembledFiles directory to your desired location and create a symlink to it in the NetworkMiner directory (hat tip to Lenny Zeltser for this idea).
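The symlink idea mentioned above can be sketched as a small shell function. This is only an illustration, not part of NetworkMiner: the function name `relocate_assembled_files` and its two arguments (the NetworkMiner directory and an absolute destination directory) are made up for this example, and the sketch assumes that NetworkMiner follows the symlink when writing extracted files.

```shell
#!/bin/sh
# Hypothetical helper (not shipped with NetworkMiner): move AssembledFiles
# to another location and leave a symlink behind in the NetworkMiner directory.
#   $1 = NetworkMiner install directory
#   $2 = absolute path of the new parent directory for AssembledFiles
relocate_assembled_files() {
    nm_dir=$1
    dest_parent=$2
    dest=$dest_parent/AssembledFiles

    # A relative destination would produce a dangling symlink, so insist
    # on an absolute path.
    case $dest_parent in
        /*) ;;
        *) echo "destination must be an absolute path" >&2; return 1 ;;
    esac

    # Do nothing if the folder has already been replaced by a symlink.
    if [ -L "$nm_dir/AssembledFiles" ]; then
        echo "AssembledFiles is already a symlink" >&2
        return 1
    fi

    # Avoid nesting the folder inside an existing AssembledFiles directory.
    if [ -e "$dest" ]; then
        echo "$dest already exists" >&2
        return 1
    fi

    mkdir -p "$dest_parent" &&
    mv "$nm_dir/AssembledFiles" "$dest" &&
    ln -s "$dest" "$nm_dir/AssembledFiles"
}

# Example call, run from inside the NetworkMiner directory (sudo may be
# needed for a directory under /opt):
# relocate_assembled_files "$PWD" "$HOME/networkminer"
```

Because the function refuses to run when the link or the destination already exists, calling it a second time by mistake leaves the extracted files where they are.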

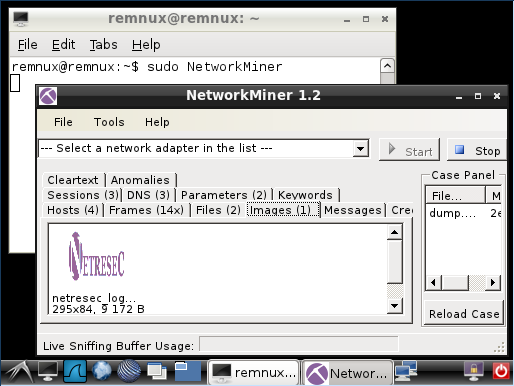

NetworkMiner 1.2 running under Ubuntu Linux with Mono, with “day12-1.dmp” from the M57-Patents Scenario loaded.

Update: See our blog post How to install NetworkMiner in Linux for an installation guide for other Linux flavors.

Posted by Erik Hjelmvik on Monday, 26 December 2011 20:30:00 (UTC/GMT)

Tags: #NetworkMiner #Mono #Wine #Linux #Ubuntu