Erik Hjelmvik, Wednesday, 07 August 2024 11:40:00 (UTC/GMT)

Do you want to analyze decrypted TLS traffic in Wireshark or let an IDS, like Suricata, Snort or Zeek, inspect the application layer data of potentially malicious TLS encrypted traffic? There are many different TLS inspection solutions to choose from, but not all of them might be suitable for the specific challenge you’re facing. In this blog post I describe three different methods for decrypting TLS and explain when to use one or the other.

| | RSA Private Key | TLS Key Log | TLS Inspection Proxy |
|---|---|---|---|
| Works for all ciphers | No (DHE cipher suites not supported) | Yes | Yes |
| TLS 1.3 supported | No | Yes | Yes |
| Zero client configuration required | Yes | No (pre-master secrets must be logged or extracted from TLS libraries) | No (root CA certificate must be installed) |
| Decrypts traffic from any application | No (most applications use modern ciphers with forward secrecy, which RSA doesn’t provide) | No (each method for TLS key extraction typically only supports a specific set of applications or TLS libraries) | No (apps that use certificate pinning or a custom certificate trust store cannot be intercepted without patching the app) |
| L7 traffic in PCAP files can be analyzed without decrypting TLS | No | No | Yes |
| Allows decrypted traffic to be mirrored to a network interface | No | No | Yes |

RSA Private Key

TLS decryption with a private RSA key was for a long time the preferred method for inspecting SSL and TLS traffic. This approach allowed anyone with access to the server’s private RSA key to decrypt the traffic and inspect the application layer (L7) communication.

The primary drawback with RSA private key decryption is that a stolen or leaked private RSA key can be used by an attacker to decrypt all previously captured traffic from that server, if RSA key exchange is being used. Modern TLS stacks have therefore deprecated such ciphers in favor of ones that support forward secrecy, which typically perform an ephemeral Diffie–Hellman (DHE) key exchange instead of reusing the same private RSA key over and over. This means that the RSA private key decryption method cannot be used if the client and server are using a key exchange algorithm that supports forward secrecy.

RSA private key decryption can only be performed when all these conditions are met:

- The protocol version is SSL 3.0, TLS 1.0, TLS 1.1 or TLS 1.2 (RSA was removed in TLS 1.3)

- The server has selected a cipher suite that uses RSA key exchange, such as TLS_RSA_WITH_AES_256_GCM_SHA384, TLS_RSA_WITH_AES_128_GCM_SHA256, TLS_RSA_WITH_AES_256_CBC_SHA256, TLS_RSA_WITH_AES_128_CBC_SHA256, TLS_RSA_WITH_AES_256_CBC_SHA or TLS_RSA_WITH_AES_128_CBC_SHA

- The private key matches the server certificate (traffic cannot be decrypted with a client certificate or an intermediate or root certificate)

- The session has not been resumed (the handshake must include a Client Key Exchange message)

This Wireshark display filter can be used to check if the server has selected an RSA cipher:

tls.handshake.type == 2 and tls.handshake.ciphersuite in {10,47,53,60,61,156,157}

You can check for a client key exchange message with:

tls.handshake.type == 16
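The checks behind these two filters can also be expressed as a small Python sketch. The decimal IDs are the ones from the display filter above, paired with their IANA cipher suite names. The function name and its parameters are my own, chosen for illustration. The sketch does not verify that the private key matches the server certificate, so that condition still has to be checked separately.

```python
# Cipher suite IDs from the display filter above, with their IANA names.
RSA_KEY_EXCHANGE_SUITES = {
    10: "TLS_RSA_WITH_3DES_EDE_CBC_SHA",
    47: "TLS_RSA_WITH_AES_128_CBC_SHA",
    53: "TLS_RSA_WITH_AES_256_CBC_SHA",
    60: "TLS_RSA_WITH_AES_128_CBC_SHA256",
    61: "TLS_RSA_WITH_AES_256_CBC_SHA256",
    156: "TLS_RSA_WITH_AES_128_GCM_SHA256",
    157: "TLS_RSA_WITH_AES_256_GCM_SHA384",
}

# Protocol versions that still allow RSA key exchange.
RSA_CAPABLE_VERSIONS = {"SSL 3.0", "TLS 1.0", "TLS 1.1", "TLS 1.2"}


def rsa_decryption_blockers(version, cipher_suite_id, has_client_key_exchange):
    """Return the reasons RSA private key decryption would fail (empty if none)."""
    blockers = []
    if version not in RSA_CAPABLE_VERSIONS:
        blockers.append(f"{version} does not support RSA key exchange")
    if cipher_suite_id not in RSA_KEY_EXCHANGE_SUITES:
        blockers.append(
            f"cipher suite {cipher_suite_id:#06x} does not use RSA key exchange"
        )
    if not has_client_key_exchange:
        blockers.append("no Client Key Exchange message (resumed session?)")
    return blockers
```

An empty list means that none of the checked conditions rules out RSA private key decryption for that session.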

A private RSA key can be loaded into Wireshark by clicking Edit, Preferences and RSA Keys. Another alternative is to use the command line tool tshark’s -ouat:rsa_keys switch like this:

tshark -r tls.pcap -ouat:rsa_keys:'"/path/rsa.key",""'
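The nested quoting in that command is easy to get wrong in a shell. If you call tshark from a script, passing the arguments as a list avoids the shell quoting altogether. Below is a minimal Python sketch of that idea. The helper names are hypothetical, and the sketch assumes the key path and password contain no double quotes or backslashes, since those would need escaping inside the UAT record.

```python
import subprocess


def tshark_rsa_args(pcap_path, key_path, key_password="", display_filter=None):
    """Build a tshark argument list that loads an RSA private key."""
    # Same UAT record as in the command above: "<key file>","<password>"
    uat_record = f'uat:rsa_keys:"{key_path}","{key_password}"'
    args = ["tshark", "-r", pcap_path, "-o", uat_record]
    if display_filter:
        # -Y applies a display filter to the decoded packets
        args += ["-Y", display_filter]
    return args


def run_tshark(args):
    """Run tshark and return its standard output (requires tshark on PATH)."""
    result = subprocess.run(args, capture_output=True, text=True, check=True)
    return result.stdout
```

For example, `run_tshark(tshark_rsa_args("tls.pcap", "/path/rsa.key", display_filter="http"))` would list only the packets that tshark managed to decode as HTTP.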

TLS Key Log

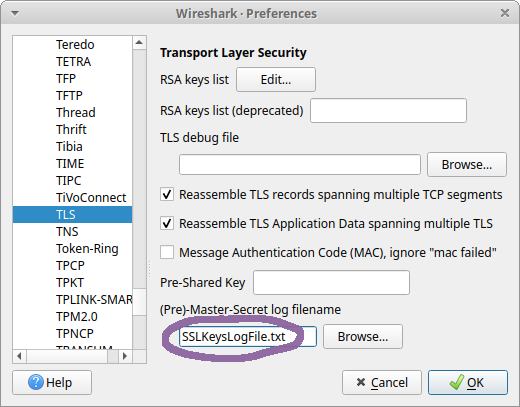

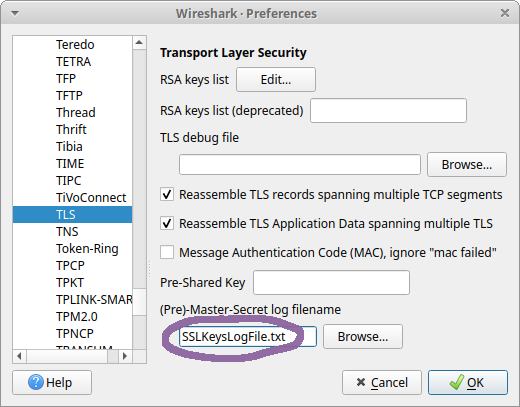

Wireshark can decrypt the TLS layer in captured network traffic if the pre-master secrets used to establish the encrypted connection are provided. These secrets, or encryption key material, can be loaded into Wireshark from an SSLKEYLOGFILE by clicking Edit, Preferences, Protocols, TLS, and setting the (Pre)-Master-Secret log filename to the path of your SSLKEYLOGFILE.

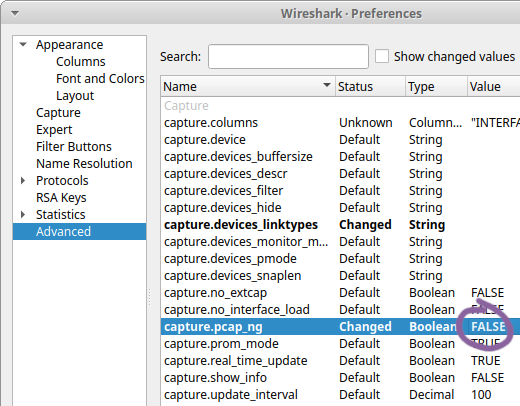

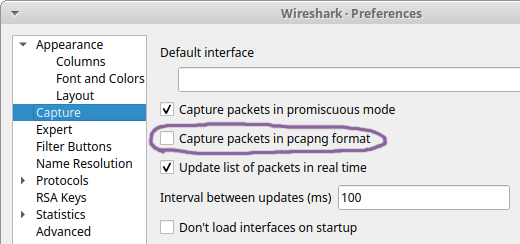

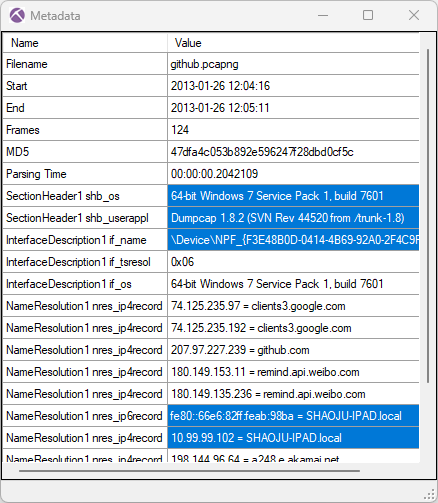

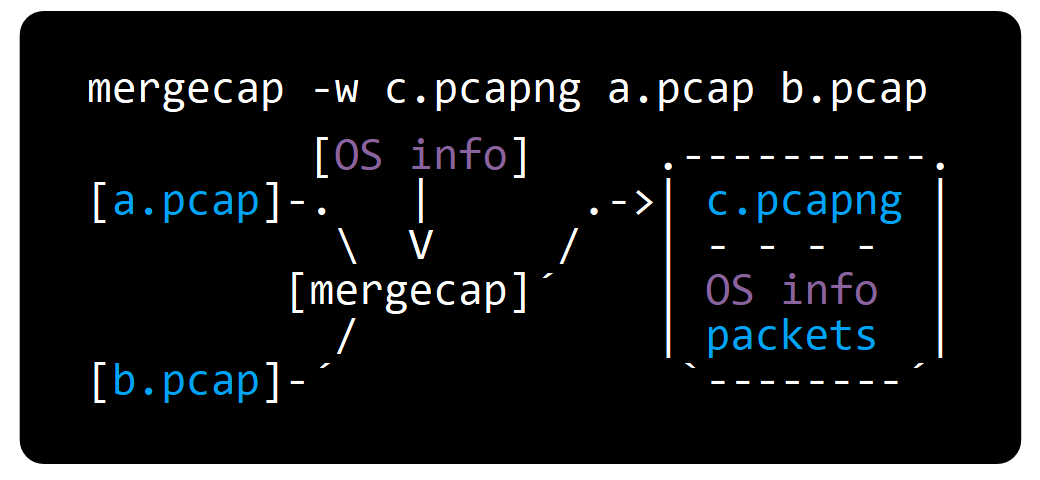

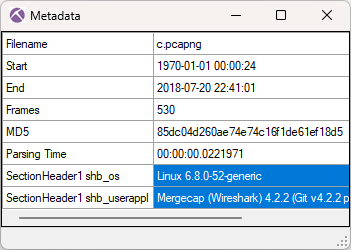

Another alternative is to encode the key material as metadata in a pcap-ng file with editcap like this:

editcap --inject-secrets tls,SSLKEYLOG.txt tls.pcap tls-and-keys.pcapng
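Before loading or injecting a key log it can be useful to check what it actually contains. A key log in the NSS format is a text file where each entry has three space-separated fields: a label, the client random in hex and the secret in hex. Lines starting with # are comments. TLS 1.2 sessions are logged with the CLIENT_RANDOM label, while TLS 1.3 sessions produce several labels such as CLIENT_HANDSHAKE_TRAFFIC_SECRET and SERVER_TRAFFIC_SECRET_0. The Python sketch below groups the entries per client random. The function names are my own and the parser is deliberately lenient, so treat it as an illustration rather than a validator.

```python
from collections import defaultdict


def parse_keylog(text):
    """Group key log entries by client random: {client_random: {label: secret}}."""
    sessions = defaultdict(dict)
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and comments carry no key material
        fields = line.split()
        if len(fields) != 3:
            continue  # not a "<label> <client random> <secret>" entry
        label, client_random, secret = fields
        sessions[client_random.lower()][label] = secret.lower()
    return dict(sessions)


def uses_tls13_secrets(entries):
    """True if a session logged TLS 1.3 traffic secrets instead of CLIENT_RANDOM."""
    return any("TRAFFIC_SECRET" in label for label in entries)
```

Comparing the client random values from `parse_keylog` with the `tls.handshake.random` field of the Client Hello messages in the capture shows which sessions the key log can decrypt.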

The primary drawback with the TLS key log decryption method is that only Wireshark and tshark can be used to analyze the decrypted TLS traffic.

You also need to get hold of the keys or pre-master secrets in order to perform the decryption.

Some applications, such as Firefox, Chrome and curl, can be configured to export a key log.

Another alternative is to install an agent that extracts key material from specific TLS libraries.

The limitation of only being able to extract keys from a specific set of applications or TLS libraries makes the TLS key log method unsuitable for analyzing TLS encrypted C2 traffic from malware, which often uses custom TLS libraries.

It is also difficult to send decrypted TLS traffic to an IDS or a network security monitoring tool using a TLS key log.

If you, on the other hand, want to analyze network traffic from your own Firefox or Chrome browser in Wireshark, then the TLS key log approach is probably the best solution.
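The same idea applies to scripts and tools you write yourself. As an illustration that goes beyond the applications named above, Python's ssl module (version 3.8 or later) can write a key log through the keylog_filename attribute of an SSLContext, and ssl.create_default_context() also honors the SSLKEYLOGFILE environment variable. The sketch below uses helper names of my own and assumes Python was built against an OpenSSL version with key log support.

```python
import socket
import ssl


def make_keylog_context(keylog_path):
    """Create a client TLS context that appends session secrets to keylog_path."""
    context = ssl.create_default_context()
    # keylog_filename requires Python 3.8+ and OpenSSL key log support
    context.keylog_filename = keylog_path
    return context


def fetch_head(host, context, port=443):
    """Send a HEAD request over TLS; the secrets end up in the key log."""
    request = f"HEAD / HTTP/1.1\r\nHost: {host}\r\nConnection: close\r\n\r\n"
    with socket.create_connection((host, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            tls.sendall(request.encode("ascii"))
            return tls.recv(4096).decode("latin-1")
```

If you capture the traffic while calling `fetch_head` with a context from `make_keylog_context`, the resulting key log can be loaded into Wireshark in the same way as one exported by a browser.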

TLS Inspection Proxy

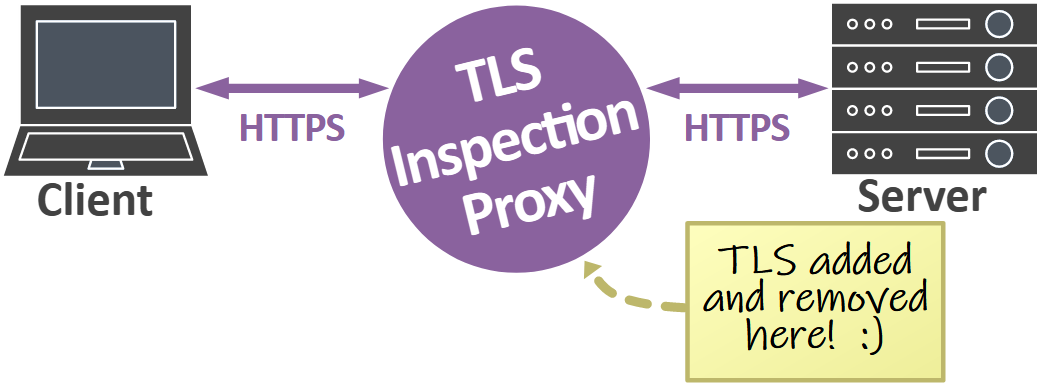

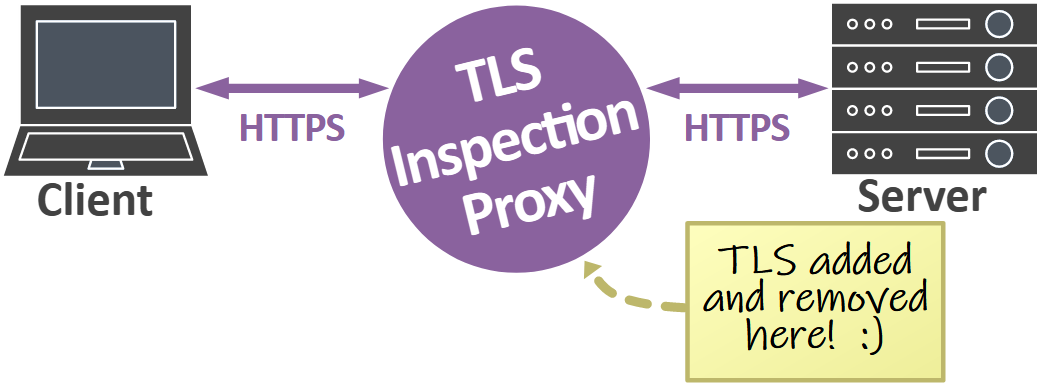

A TLS inspection proxy acts as a man-in-the-middle that intercepts and decrypts TLS traffic for inspection. It then re-encrypts the traffic and forwards it to the intended destination.

A major advantage of using a TLS inspection proxy is that decrypted TLS traffic can be analyzed from applications even if they use modern ciphers with forward secrecy and don’t support logging of TLS keys. The drawback, however, is that clients have to trust the root CA certificate that the proxy is using.

TLS inspection proxies often differ in how they make the decrypted traffic available to external tools, if at all. In fact, many TLS inspection proxies and Next-Generation Firewalls (NGFW) only make the decrypted payload available to the internal application or appliance. Such an approach prevents analysis of the decrypted traffic with an external tool, like Wireshark, Snort, Suricata, Zeek or NetworkMiner.

Another approach, used by proxies like mitmproxy, is to save a TLS key log for all proxied traffic. That approach allows captured TLS traffic to or from the proxy to be decrypted and inspected with Wireshark, but the application layer traffic cannot be inspected with other tools that don’t support TLS decryption using a key log.

The third and most integration-friendly approach is to save the decrypted traffic in clear text form, so that other applications can inspect the unencrypted traffic without having to decrypt TLS. Some TLS proxies, like PolarProxy and SSLsplit, can even save the decrypted traffic to a PCAP file. Decrypted TLS traffic in PCAP format can easily be ingested into other tools or be replayed to a network interface for inspection by an external security appliance.

Best Practices

The list below can be used to select the best suited TLS inspection method for the particular challenge you’re tasked with.

I want to...

- Inspect traffic from my browser.

Use TLS key log to inspect traffic from Firefox, Chrome and curl. Use a TLS inspection proxy for other browsers.

- Inspect traffic to my HTTPS website.

Use RSA private key inspection if it is acceptable to use an older TLS version and less secure ciphers.

Use a TLS key log if your web server can be configured to export one or if you have an agent based key extraction solution that supports the TLS library used by the web server.

Use a TLS inspection proxy if you want to inspect the traffic with something other than Wireshark.

- Inspect potentially malicious TLS traffic with an IDS or security appliance.

Use a TLS inspection proxy.

- Inspect traffic from my operating system.

Use a TLS inspection proxy.

- Inspect traffic from my mobile phone, smart device or other embedded device.

Use a TLS inspection proxy.

- Inspect traffic from a proprietary game, app or service.

Use a TLS inspection proxy.


Tags:

#TLS

#TLS Inspection

#PolarProxy

#SSLKEYLOGFILE

#Wireshark

#PCAP